If you care about your website's health and its ability to be found in search by potential customers, staying off Google's "Low Quality" website list is crucial.

For one, who wants their website to be recognized as low quality by the world's most dominant search engine? It's pretty obvious what this could mean when you're trying to outrank your competition. But how does a website get flagged as "low quality," and what's the impact of finding your way onto this list?

The "Low Quality" website list relates to a patent that Google filed all the way back in June 2012 and that was published in April 2015. The patent aims to analyze the quality of resource links (such as footer or sidebar links that repeat on numerous pages of a website) that point to another website or to an internal page.

According to a very thorough overview by Bill Slawski of SEO By The Sea, the Google patent places the resources that link to a site into resource quality groups, counts the number of resources in each group, and uses those counts to determine a link quality score for the site. A site classified as low quality can have the ranking score of its search results decreased by an amount based on the link quality score, following a formula listed in the patent.

To translate this: if a low-credibility website links to yours from its footer or sidebar, so that the same link repeats across many of its pages, your rankings may be affected.
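To make the idea concrete, here is a rough Python sketch of how counts per quality group could be turned into a site-level score and then into a ranking demotion. The patent's actual formula is not reproduced in this post, so the group names, the weights, the threshold, and the penalty below are all invented for illustration only.

```python
from collections import Counter

# Hypothetical weights per quality group. These are NOT from the patent;
# they only illustrate "count the resources in each group, then score".
GROUP_WEIGHTS = {"high": 1.0, "medium": 0.5, "low": -1.0}


def link_quality_score(linking_resources):
    """Return a score between -1 and 1 from an iterable of group labels.

    Each item is the (assumed) quality group of one resource linking to the site.
    """
    counts = Counter(linking_resources)
    total = sum(counts.values())
    if total == 0:
        return 0.0  # no inbound links, so nothing to judge
    weighted = sum(GROUP_WEIGHTS[group] * n for group, n in counts.items())
    return weighted / total


def adjusted_ranking_score(base_score, quality_score, threshold=0.0, penalty=0.2):
    """Lower base_score when quality_score falls below the (assumed) threshold.

    The reduction grows with the distance below the threshold.
    """
    if quality_score >= threshold:
        return base_score
    return base_score * (1 - penalty * (threshold - quality_score))
```

With these made-up numbers, a site linked from two "high", one "medium", and seven "low" resources scores below zero and would see its ranking score trimmed, while a site with mostly "high" links would be left alone.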

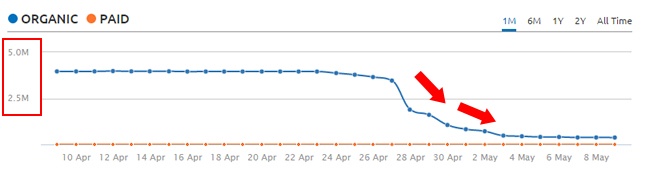

As noted above, the patent behind the "low quality" list was initially filed in June of 2012 and was published in its current form in April 2015. A Google algorithm update that has been nicknamed Phantom 2 started creating a stir the week of April 27, which closely coincides with this patent being published. In comparison to other Panda-like updates, the Phantom 2 update (which apparently is driven by the "low quality" list) caused a 10-20% increase or decrease in website traffic for some sites.

The image here from G-Squared Interactive shows the impact of this type of ranking update on website traffic.

As Google reduces the rank of websites that appear to be low quality, higher-quality sites move up in the rankings as a result. So this isn't a malicious attack on Google's part, but more of its way of getting more deserving websites to rank where they should.

As I've covered in other blog posts, if you follow the rules Google spells out with its ongoing algorithm updates, you can avoid jeopardizing your website's credibility. But with this particular update, you may have inadvertently broken the rules. Here's what to be aware of:

Boilerplate content (such as the identical company description that closes every press release) often contains links, and it can be seen as low quality because it's repeated over and over. Depending on how many of your press releases appear on a specific PR platform or publication, this could cause some issues.

A proper precaution for these types of boilerplate links is to use no-follow links (links marked with `rel="nofollow"`), which tell Google you're not trying to use these links for SEO value. Another option is to keep your links in the body of the press release, since that content changes for each release.

Footer links such as copyright notices, sitemaps, or the "built by/powered by" links often used by web developers or software providers may be read as low quality links for the websites they point to. So again, use no-follow links for this type of thing, or remove them if you can.

The same applies to sidebar links that are repeated on multiple pages, such as a blog's list of external resources. If you plan to use these, make them no-follow links.
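If you want to check your own pages for these repeated links, a small script can do a first pass. The sketch below uses only Python's standard library and assumes your footer and sidebar are marked up with `<footer>` and `<aside>` elements, which may not match your theme. The class and function names are made up for this example. It lists external links in those regions that are missing `rel="nofollow"`.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class RepeatedLinkAuditor(HTMLParser):
    """Collect followed external links found inside <footer> or <aside>."""

    REGIONS = {"footer", "aside"}  # assumed markup for footer and sidebar

    def __init__(self, own_host):
        super().__init__()
        self.own_host = own_host
        self.depth = 0      # how many footer/aside elements we are inside
        self.flagged = []   # hrefs that are external and not nofollow

    def handle_starttag(self, tag, attrs):
        if tag in self.REGIONS:
            self.depth += 1
            return
        if tag != "a" or self.depth == 0:
            return
        attr = dict(attrs)
        href = attr.get("href") or ""
        host = urlparse(href).netloc  # empty for relative (internal) links
        rel = (attr.get("rel") or "").lower().split()
        if host and host != self.own_host and "nofollow" not in rel:
            self.flagged.append(href)

    def handle_endtag(self, tag):
        if tag in self.REGIONS and self.depth > 0:
            self.depth -= 1


def find_followed_links(html, own_host):
    """Return external footer/sidebar links that lack rel="nofollow"."""
    auditor = RepeatedLinkAuditor(own_host)
    auditor.feed(html)
    return auditor.flagged
```

Run `find_followed_links(page_html, "yoursite.com")` against a saved copy of a page, and anything it returns is a candidate for a no-follow attribute or for removal.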

Google has been cracking down on poor link quality and unnatural link building since the Panda and Penguin updates in 2011 and 2012. This most recent patent, which determines the "low quality" website list based on the types of links noted above and their frequency, is just the next evolution.

There are times when these types of links have to appear in areas that repeat across a site, so be mindful of this and of the SEO weight you put on them. As I've noted above, your safest bet is to make them all no-follow so it's clear to Google that you don't intend to get SEO value from them.

If you're receiving these repeated links through no action of your own, you may need to do some outreach to have them removed or changed to no-follow. If that fails, you may have to disavow the links with Google's disavow links tool.
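Google's disavow tool takes a plain text file with one entry per line: either a full URL or a `domain:` entry that covers a whole site, with `#` marking comment lines. As a minimal sketch, the helper below (a hypothetical function, with placeholder domains in the usage note) builds that file from a list of unwanted links.

```python
from urllib.parse import urlparse


def build_disavow_file(bad_urls, whole_domains=()):
    """Return disavow file text: domain entries first, then single URLs.

    URLs that sit on an already-disavowed domain are skipped as redundant.
    """
    domains = set(whole_domains)
    lines = ["# Repeated footer/sidebar links we could not get removed"]
    for domain in sorted(domains):
        lines.append(f"domain:{domain}")
    for url in sorted(set(bad_urls)):
        if urlparse(url).netloc not in domains:
            lines.append(url)
    return "\n".join(lines) + "\n"
```

For example, `build_disavow_file(["https://other.example/footer"], ["spam.example"])` gives you text you can save as a `.txt` file and upload. Treat disavowing as a last resort after outreach, since it tells Google to ignore those links entirely.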
